Revitalizing Earned Value Management Systems (EVMS)

Quick Summary

- Regulatory changes and updated standards are creating an opportunity to revitalize EVM Systems. The FAR overhaul, revised agency thresholds, and the EIA-748-E Standard have streamlined requirements while reinforcing the continued need for an effective EVMS.

- Organizations have an opportunity to refocus on value-driven EVM practices. Rather than treating EVMS as a check-the-box requirement, this is an opportunity to renovate bloated processes and remove non-value-added activities to establish a flexible “living” system that supports proactive project management and credible forecasting.

- BI and AI tools can transform EVM data into a real-time decision-making advantage. When supported by reliable, integrated data, these tools can rapidly organize information, improve visibility, identify risks early, and help project teams respond faster to changing priorities as well as technical, schedule, and cost challenges.

With recent changes in government regulatory requirements, the publication of the EIA-748-E Standard for EVMS, and evolving Business Intelligence (BI) and artificial intelligence (AI) tools, the components for revitalizing Earned Value Management Systems (EVMS) are falling into place. This is an opportunity to refocus on the original purpose of an EVMS and the effective use of real-time EVM data to address problems quickly, before they become critical.

As highlighted in a previous blog, “Earned Value Management (EVM): How Much is Enough?”, being merely “compliant” with the EIA-748 Guidelines should not be the goal. That strategy fails to take advantage of the benefits of an EVMS; it is also short-sighted. Too often an EVMS is perceived as a contractual check-the-box exercise or as an exercise in detailed scorekeeping.

The goal should be to become efficiently expert at EVM: a commitment to become “best-in-class” as expert practitioners of EVM. Following this strategy, an organization’s EVMS is actively maintained and used to ensure it provides the relevant, useful information needed to manage projects for success. EVM is a powerful project management methodology that integrates scope, schedule, and cost management to provide a clear picture of project performance, the forecast completion date, and the estimate at completion. BI and AI tools are enhancing the ability to rapidly organize and analyze real-time EVM data for proactive management and clear, transparent communication with the customer. This also supports the need for speed in delivering capabilities to the customer when trade-offs between requirements, schedule, and cost must be made.

Trimming Contractual and Guideline Requirements

The regulatory environment has been evolving; government entities are either simplifying or changing the requirements for an EVMS. As a reminder, the Capital Programming Guide, a supplement to Office of Management and Budget (OMB) Circular A-11, Planning, Budgeting, and Acquisition of Capital Assets, establishes the government’s EVMS requirements for major acquisitions. This Guide states that contractors must use an EVMS that meets the EIA-748 guidelines to monitor contract performance. All agency EVMS regulations point back to Circular A-11.

A summary of recent changes follows.

The Revolutionary Federal Acquisition Regulation (FAR) Overhaul, which began in May 2025, focused on removing most non-statutory rules and rewriting requirements in plain language. Subpart 34.2 – Earned Value Management System was trimmed to the basic EVMS and Integrated Baseline Review (IBR) requirements. The Notice of EVMS – Pre-Award IBR and Notice of EVMS – Post-Award IBR clauses were removed; the subpart now simply states that an IBR is required. The text of contract clause 52.234-4 for EVMS was streamlined. Key takeaway: the overhaul reaffirmed the value of an EVMS and IBRs. What is unchanged: an EVMS is still required for development contracts that are major acquisitions, requirements flow down to subcontractors, and IBRs are required.

Defense Federal Acquisition Regulation Supplement (DFARS) Class Deviation 2026-O0011 (February 2026) was issued in response to the FAR Overhaul. Under Subpart 234.2, Earned Value Management System, section 234.201 Policy raised the contract value threshold for EVMS reporting from ≥ $20M to ≥ $50M and incorporated the 2015 Class Deviation memo that increased the contract value threshold for compliance reviews to ≥ $100M. There are also new related Class Deviation clauses: 252.234-7001 Notice of EVMS is now 252.234-7998, and 252.234-7002 EVMS is now 252.234-7999.
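To make the revised DoD thresholds concrete, here is a minimal sketch that encodes them as a simple lookup. It assumes the thresholds exactly as summarized above; the helper name `dod_evms_applicability` and its constants are hypothetical, and the sketch ignores contract type, exemptions, and contracting officer determinations that govern real applicability.

```python
from dataclasses import dataclass

# Thresholds as summarized above for DFARS Class Deviation 2026-O0011.
# Illustrative only: contract type, exemptions, and contracting officer
# determinations are not modeled here.
EVMS_REPORTING_THRESHOLD = 50_000_000       # >= $50M: EVMS reporting
COMPLIANCE_REVIEW_THRESHOLD = 100_000_000   # >= $100M: compliance review


@dataclass(frozen=True)
class EvmsApplicability:
    evms_reporting: bool
    compliance_review: bool


def dod_evms_applicability(contract_value: float) -> EvmsApplicability:
    """Hypothetical helper: which DoD EVMS requirements a contract value triggers."""
    return EvmsApplicability(
        evms_reporting=contract_value >= EVMS_REPORTING_THRESHOLD,
        compliance_review=contract_value >= COMPLIANCE_REVIEW_THRESHOLD,
    )


if __name__ == "__main__":
    for value in (20_000_000, 75_000_000, 150_000_000):
        print(f"${value:,}: {dod_evms_applicability(value)}")
```

Under the previous ≥ $20M threshold, a $20M contract would have triggered EVMS reporting; under the revised threshold it triggers neither requirement.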

NASA FAR Supplement 1834.201 Policy Class Deviation (June 2025), along with NASA’s solicitation clause (1852.234-1) and contract clause (1852.234-2), aligns with the DoD contract value threshold changes and revised clauses.

National Nuclear Security Administration (NNSA). Although NNSA is part of the Department of Energy (DOE), as of September 2025 it is the Cognizant Federal Agency (CFA) for NNSA projects. NNSA deliberately simplified its compliance and surveillance process so it can respond rapidly to threats. Contractors self-assess their EVMS, and NNSA uses an EIA-748 Guideline checklist, reviews data artifacts, and conducts interviews for evidence of compliance. Projects with a Total Project Cost > $300M require certification reviews and are subject to surveillance reviews.

EIA-748-E Standard for EVMS. The EIA-748-E was approved and published in February 2026. This long-overdue update reduced the number of guidelines from 32 to 27 and reflects current business system capabilities. To improve clarity, existing guidelines were revised or merged, two were added, and four were deleted.

With the publication of the EIA-748-E, industry guides and government agency compliance and surveillance review materials have been updated or are in the process of being updated. The NDIA IPMD Intent Guide for EIA-748-E will be available on the NDIA IPMD web site once it completes the membership review and approval process. The DoD Earned Value Management System Interpretation Guide (EVMSIG) is also being updated to reflect the EIA-748-E. Once the EVMSIG is published, the DCMA EVMS Group will update its Business Practices, appendices, and DCMA EVMS Compliance Metrics (DECM). DCMA has already trimmed its DECMs to a set of 60 standard, 10 conditional, and 72 low-priority tests.

Impact of BI and AI Enabled Tools and Apps

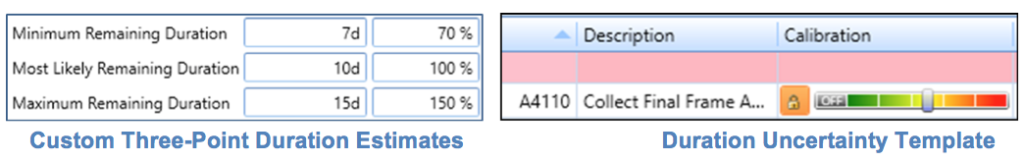

BI and AI tools speed up pulling data from different sources for defined use cases and organizing it for analysis. With the right business system interfaces and tools, the time lag to view current data can be eliminated. These tools can quickly produce a variety of dashboards or data views that let users drill down into the data and sort and filter it as needed for root cause analysis. AI agents designed for specific use cases can also speed up organizing and presenting data for real-time decision making. These dashboards and views can be tailored for specific users such as project managers, control account managers (CAMs), functional managers, schedulers, finance, material or subcontract management, and others.
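As a rough illustration of the sort-and-filter step, the sketch below flags control accounts whose cost or schedule variance is unfavorable beyond a percentage threshold and sorts them worst-first for investigation, optionally for a single CAM. The record fields, the 10% threshold, the function name, and the sample data are hypothetical; a real BI tool would pull these values from the integrated system of record.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class ControlAccount:
    # Hypothetical record shape; real field names depend on your data model.
    account_id: str
    cam: str       # control account manager
    bcws: float    # budgeted cost of work scheduled (planned value)
    bcwp: float    # budgeted cost of work performed (earned value)
    acwp: float    # actual cost of work performed

    @property
    def cv(self) -> float:
        """Cost variance: earned value minus actual cost."""
        return self.bcwp - self.acwp

    @property
    def sv(self) -> float:
        """Schedule variance: earned value minus planned value."""
        return self.bcwp - self.bcws


def flag_for_analysis(
    accounts: Iterable[ControlAccount],
    threshold_pct: float = 10.0,
    cam: Optional[str] = None,
) -> List[ControlAccount]:
    """Keep accounts with an unfavorable CV% or SV% at or beyond threshold_pct,
    optionally for one CAM, sorted worst cost variance first."""
    flagged = []
    for ca in accounts:
        if cam is not None and ca.cam != cam:
            continue
        # CV% is relative to earned value, SV% to planned value;
        # a zero denominator is treated as "no variance" rather than flagged.
        cv_pct = 100.0 * ca.cv / ca.bcwp if ca.bcwp else 0.0
        sv_pct = 100.0 * ca.sv / ca.bcws if ca.bcws else 0.0
        if cv_pct <= -threshold_pct or sv_pct <= -threshold_pct:
            flagged.append(ca)
    return sorted(flagged, key=lambda ca: ca.cv)


accounts = [
    ControlAccount("CA-100", "Lee", bcws=100.0, bcwp=90.0, acwp=110.0),
    ControlAccount("CA-200", "Lee", bcws=200.0, bcwp=200.0, acwp=195.0),
    ControlAccount("CA-300", "Diaz", bcws=150.0, bcwp=120.0, acwp=125.0),
]
for ca in flag_for_analysis(accounts):
    print(ca.account_id, ca.cam, ca.cv, ca.sv)
```

Here CA-100 is flagged for its cost overrun and CA-300 for its schedule slip, while CA-200 is on plan. Passing `cam="Diaz"` gives the drill-down view a single CAM would see.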

Taking advantage of BI and AI requires a defined enterprise strategy. Data is the backbone of any AI model – data is needed to “teach” AI how to spot patterns and make predictions. This data includes the vast volume of an organization’s transaction records, analytics, and proprietary information across multiple systems.

The problem? Organizations often lack a consistent, verified version of data (the single source of truth), so there is uncertainty about which data should be used for analysis and to “feed” their AI models. At the same time, internal proprietary data must not be exposed to the outside world. The single source of truth must exist in a governed and curated environment; it must be organized and integrated with a defined data model so that real-time data streams can be analyzed without creating multiple versions of the truth.

The challenge is that many organizations still do their enterprise planning, including estimating, budgeting, and many other functions, in spreadsheets. That information is not accessible to others or captured in a common database, so employees end up debating discrepancies between spreadsheets rather than analyzing the data in question.
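A minimal sketch of what a single source of truth makes possible: if the governed system of record is treated as authoritative, discrepancies in a spreadsheet copy can be listed mechanically instead of debated. The function name `reconcile`, the control-account keys, the values, and the tolerance are all hypothetical.

```python
from typing import Dict


def reconcile(
    system_of_record: Dict[str, float],
    spreadsheet: Dict[str, float],
    tolerance: float = 0.01,
) -> Dict[str, str]:
    """Compare spreadsheet values to the authoritative system of record,
    keyed by control account, and describe each discrepancy."""
    issues: Dict[str, str] = {}
    # Union of keys so that rows missing on either side are reported.
    for key in sorted(system_of_record.keys() | spreadsheet.keys()):
        if key not in spreadsheet:
            issues[key] = "missing from spreadsheet"
        elif key not in system_of_record:
            issues[key] = "not in system of record"
        elif abs(system_of_record[key] - spreadsheet[key]) > tolerance:
            issues[key] = (
                f"mismatch: system of record={system_of_record[key]:,.2f}, "
                f"spreadsheet={spreadsheet[key]:,.2f}"
            )
    return issues


budget_sor = {"CA-100": 1200.0, "CA-200": 800.0, "CA-300": 500.0}
budget_sheet = {"CA-100": 1200.0, "CA-200": 850.0, "CA-400": 90.0}
for account, issue in reconcile(budget_sor, budget_sheet).items():
    print(account, issue)
```

The design choice is that the comparison is one-directional in authority: the spreadsheet is checked against the system of record, never the other way around.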

Once an organization has determined which system contains the official single source of truth and how data is organized and integrated, a variety of commercial off-the-shelf (COTS) tools are available for the next step. Employees familiar with BI and AI tools (the power users) can turn ideas into apps in a matter of hours or days that help them and their teams get things done. They can quickly build dashboards specific to their business environment, analyze real-time data pulled from various data sets, and produce outputs designed for different users or use cases.

Putting All the Pieces Together

What are the three primary takeaways?

The requirement to provide a fact-based assessment of project progress and forecast isn’t going away. The FAR overhaul didn’t do away with EVMS or the related fundamental requirements. It does, however, require organizations to be efficiently expert at EVM. A “living” EVMS (i.e., actively maintained and used) that can be scaled/tailored to management needs for each project is essential.

Changes to the requirements provide an opportunity to update processes and procedures that are “bloated” or that haven’t been updated to reflect new tools. Since the EVMS will need to be reviewed anyway to verify that it supports the revised guidelines and updated agency requirements, there may be non-value-added content or steps that can be eliminated.

BI and AI tools are useful for organizing real-time data into actionable information. Organizations taking advantage of these tools can rapidly respond to realized or emerging risks and changing scope or priorities in response to evolving threats. This creates a competitive advantage.

Returning to a Focus on Proactive Management

This is an opportunity to return to the original objective of an EVMS: timely and relevant information for proactive decision making to ensure project success and a happy customer. The effectiveness of an EVMS should be measured by technical, schedule, and cost performance metrics. Product acceptance and in-process controls are examples of technical performance metrics. Schedule status and forecast, the cumulative-to-date cost performance index (CPI), the estimate at completion (EAC), and the to-complete performance index (TCPI) are examples of schedule and cost performance metrics.
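The cost metrics named above follow standard EVM formulas: CPI = BCWP / ACWP; a CPI-based EAC = ACWP + (BAC - BCWP) / CPI, which simplifies to BAC / CPI; and TCPI = (BAC - BCWP) / (target - ACWP), where the target is either the BAC or the current EAC. The sketch below assumes cumulative-to-date inputs and uses the CPI-based EAC only as a default; in practice the EAC is often a bottom-up estimate, which can be passed in instead. The function and field names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CostMetrics:
    cpi: float       # cumulative cost performance index
    eac: float       # estimate at completion
    tcpi_bac: float  # efficiency needed on remaining work to finish at BAC
    tcpi_eac: float  # efficiency needed on remaining work to finish at EAC


def _tcpi(work_remaining: float, funds_remaining: float) -> float:
    # If no funds remain against the target, TCPI is undefined; report infinity.
    return work_remaining / funds_remaining if funds_remaining > 0 else math.inf


def cost_metrics(bac: float, bcwp: float, acwp: float,
                 eac: Optional[float] = None) -> CostMetrics:
    """Compute cumulative CPI, EAC, and TCPI from cumulative-to-date values.
    If no EAC is supplied, a CPI-based EAC is used."""
    if acwp <= 0 or bcwp <= 0:
        raise ValueError("BCWP and ACWP must be positive")
    cpi = bcwp / acwp
    if eac is None:
        eac = acwp + (bac - bcwp) / cpi  # equivalent to bac / cpi
    work_remaining = bac - bcwp
    return CostMetrics(
        cpi=cpi,
        eac=eac,
        tcpi_bac=_tcpi(work_remaining, bac - acwp),
        tcpi_eac=_tcpi(work_remaining, eac - acwp),
    )


print(cost_metrics(bac=1000.0, bcwp=400.0, acwp=500.0))
print(cost_metrics(bac=1000.0, bcwp=400.0, acwp=500.0, eac=1100.0))
```

With these sample numbers the CPI is 0.80 and the CPI-based EAC is 1,250. Finishing at the original BAC would require a TCPI of 1.20: each remaining dollar would have to earn $1.20 of work, compared with $0.80 earned per dollar to date. That gap is the kind of signal a credible forecast should surface early.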

Too often the perceived approach to a “compliant” EVMS is to drive the data to an excessive level of detail along with restrictive rules and guidance that result in a system that is cumbersome and painful to use. It reinforces the perception that EVMS is too costly – something the customer doesn’t want to pay for because they don’t see the value.

The alternative? An organization that is efficiently expert at EVM, where the customer has directly experienced the value of using real-time performance data to successfully manage their program. Non-value-added activities have been eliminated. An actively maintained and used EVMS is also resilient; project teams can quickly respond to evolving priorities and threats. Taking advantage of the power and agility of BI and AI tools/apps can help project teams focus on what matters with real-time data and analytics.

Taking Advantage of the Opportunity to Revitalize EVM

Changing the view that EVMS is burdensome, costly, and of no value will take time. It depends upon organizations choosing to become efficiently expert at EVM.

Recent changes in requirements and the guidelines will require organizations to review the state of their EVM Systems, which creates an opportunity to eliminate non-value-added activities. At the same time, powerful BI/AI tools enable real-time data analysis, helping project teams be more proactive and renovate EVMS functions. The effectiveness of the EVMS becomes apparent when it provides real-time visibility into project performance with a credible forecast completion date and estimate at completion.

When the customer has confidence that the organization’s EVMS provides the visibility they need, and that earned value based project management is a valuable tool, there is no need for excessive government oversight that drives up the cost of managing projects.

Next Steps

Consider having an independent third party complete a thorough assessment of your EVMS process areas and documentation to identify where content can be trimmed and clarified or where non-value-added steps can be removed, particularly if you are starting to integrate BI and/or AI tools into your EVMS and other business systems. Call us today to get started.
